Edge AI Inference Modules: The Crucial Link for Low-Latency Autonomy

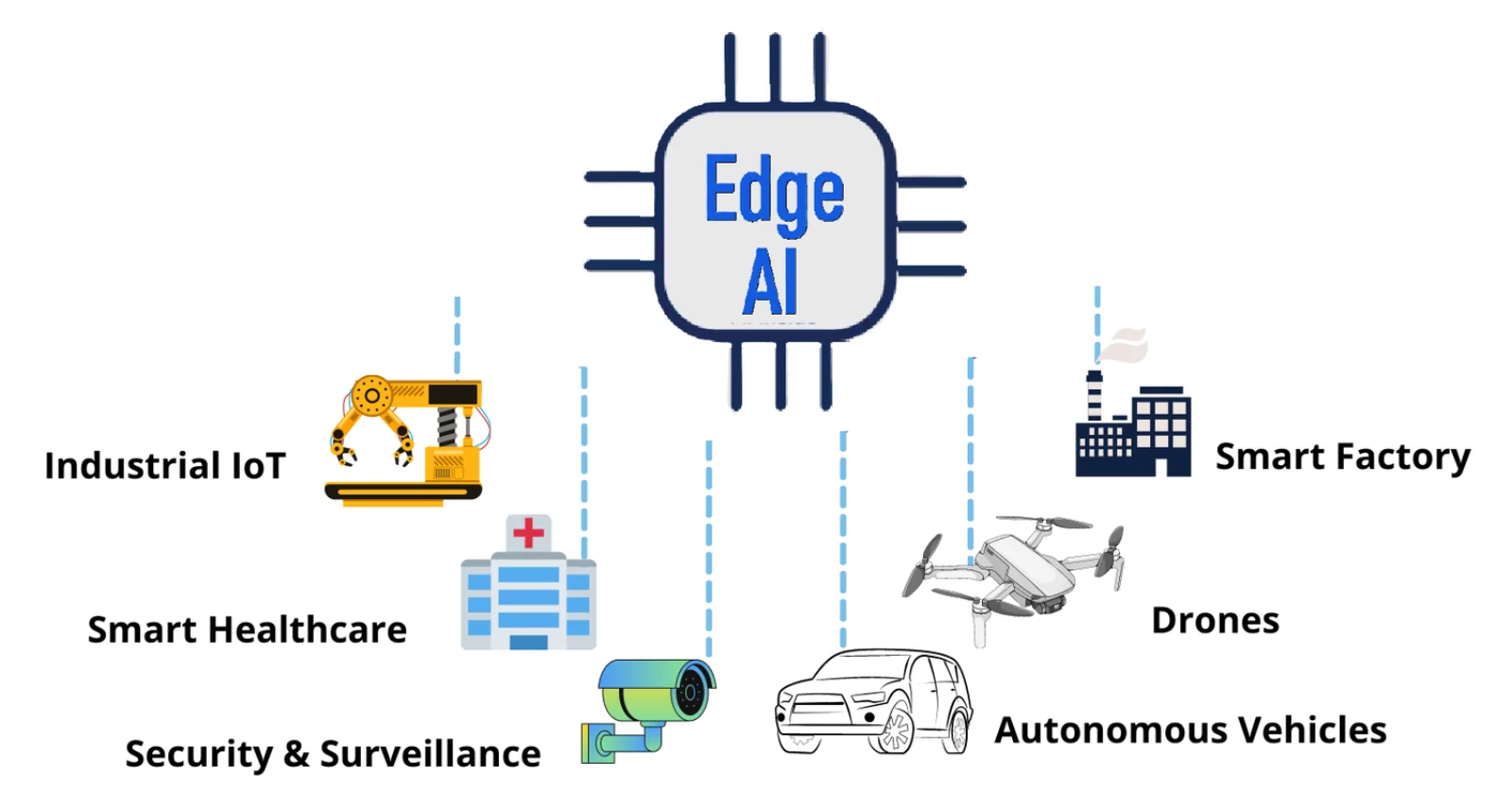

In the world of autonomy, every millisecond matters. When a self-driving car in the US needs to identify a pedestrian or a factory robot in China must halt due to an obstruction, waiting for data to travel to a cloud server and return with a decision is not an option—it’s a...

The Nuance of Control: How AI-Based Stacks Enable Human-Like Reactions in Robotics

The difference between a clunky, pre-programmed industrial robot and a fluid, general-purpose humanoid is the intelligence of its Control Stack. At Robotonomous, we have engineered AI-based control stacks that go beyond simple commands, enabling machines to exhibit nuanced,...

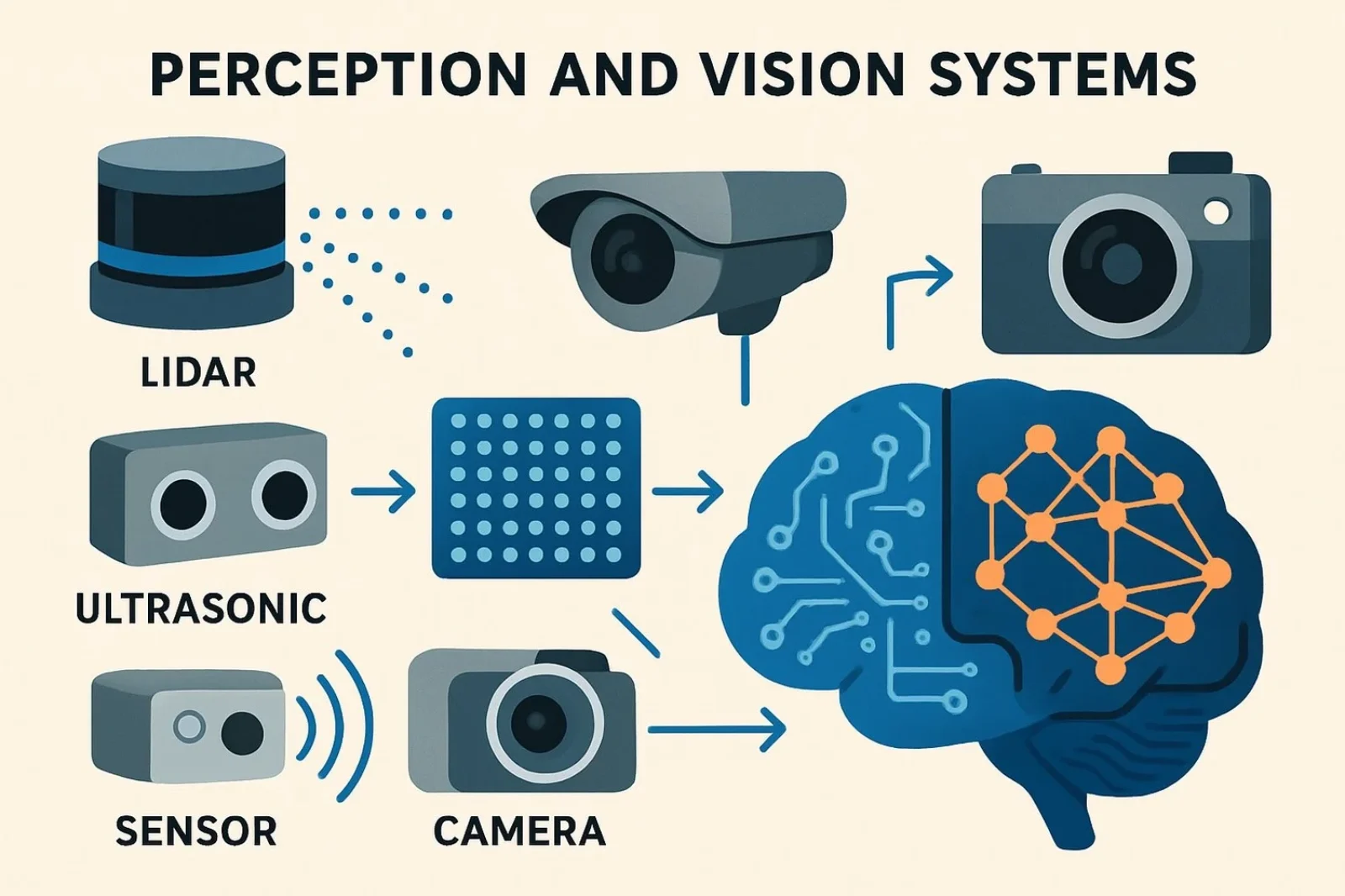

The Foundation of Full-Stack Autonomy: Mastering Sensor Fusion and Environmental Perception

A machine is only as intelligent as its perception of the world. For truly autonomous operations—from a warehouse robot maneuvering tight aisles to a self-driving truck navigating dense US traffic—a comprehensive, accurate understanding of the environment is non-negotiable....

Beyond the Lab: How Digital Twins Ensure Real-World Readiness for Autonomous Fleets

The transition from a successful autonomous vehicle (AV) prototype in the lab to a safe, regulatory-compliant fleet on the public roads of London, Toronto, or Shenzhen is one of the industry’s greatest challenges. The key to unlocking this massive potential and...

The LTA Revolution: Why Next-Generation Robotics Demand Learning, Training, and Autonomy Systems

At Robotonomous, we believe the future of machines isn’t about being pre-programmed; it’s about being intelligent. This shift is driven by the rise of Learning, Training, and Autonomy (LTA) Systems. These integrated frameworks are the foundational...

Perception SDK: Accelerating the Journey from Pixels to Insights

You’ve probably heard of Tesla’s autonomous vehicles. Ever wondered how they understand their surroundings? The answer lies in perception: the ability of machines to interpret their environment through vision and other sensors. In robotics, perception is what enables...

What Is Robotic Manipulation & Dexterity, and What Is the Latest in This Field?

Exploring Robotic Manipulation and Dexterity: Robotic manipulation and dexterity are fascinating fields that focus on a robot’s ability to interact with objects in a way that mimics human performance. This involves tasks like grasping, moving, assembling, or manipulating...

Jetson Orin platform and Edge AI inference plugin

The Jetson Orin platform from NVIDIA is a high-performance, energy-efficient edge computing solution designed for real-time AI inference, robotics, and sensor fusion tasks. The ecosystem supports plug-and-play integration with most popular Edge AI inference plugins and...

Perception SDK

What “Perception SDK” means in industry (short): A perception SDK is middleware plus libraries that let engineers convert raw sensor data (cameras, LiDAR, radar, IMU) into usable scene representations (2D/3D detection, tracking, semantic maps, occupancy, BEV, etc.). In industry...

Edge AI Inference Module and Market

NVIDIA is a powerhouse in edge AI, especially for high-performance robotics and autonomous systems. Its Jetson Orin platform delivers up to 275 TOPS, making it ideal for demanding tasks like 3D perception and real-time object detection. Jetson AGX Orin kits are widely used...