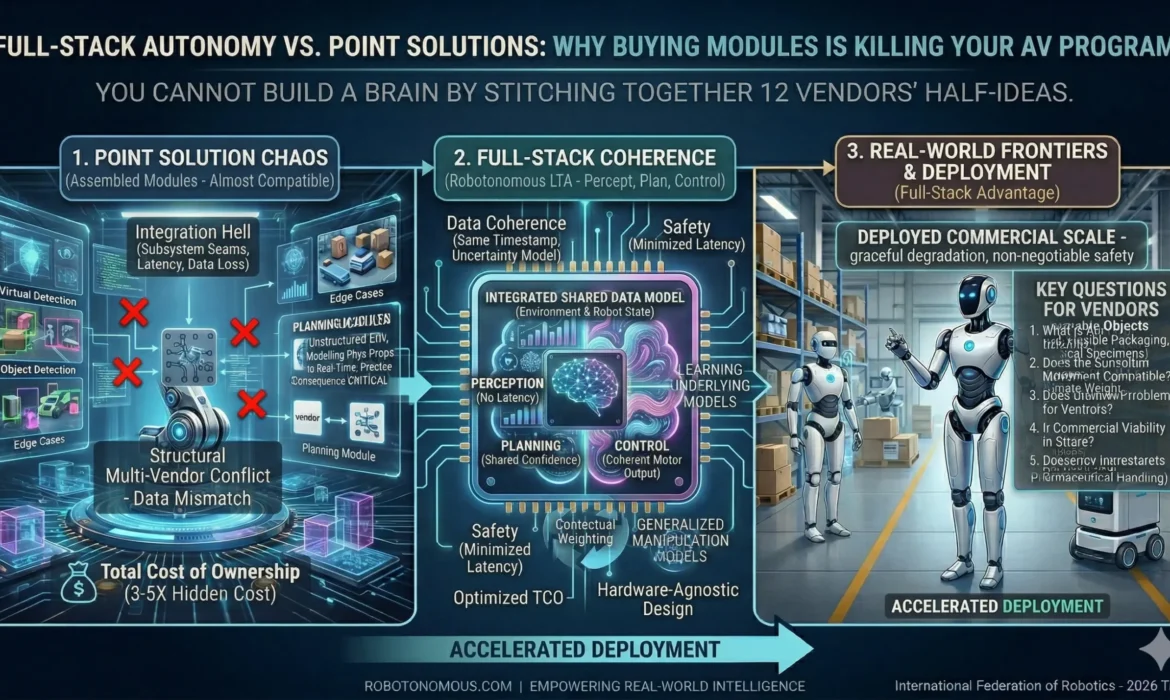

“You cannot build a brain by stitching together 12 vendors’ half-ideas.”

The integration hell no one warns you about

Most autonomous vehicle and robotics programs do not fail because the technology does not work. They fail because the technology does not work together. A perception module from one vendor. A planning layer from another. A control stack from a third. Data formats that are almost compatible. Latency tolerances that almost align. Failure modes that each vendor’s documentation almost covers. Almost is the word that defines the experience of most teams building autonomy from assembled point solutions.

The symptoms emerge gradually. Integration timelines slip by months. End-to-end performance is inexplicably worse than the individual component benchmarks suggest. Edge cases fall into the seams between subsystems, where the perception layer’s output format does not quite match the planning layer’s input assumptions, and the result is a decision-making failure that neither vendor will take responsibility for.

Why perception, planning, and control must share a data model

The fundamental technical argument for full-stack autonomy is not convenience — it is data coherence. In a well-engineered full-stack system, perception, planning, and control operate on a shared, continuously updated representation of the robot’s state and environment. When the perception layer detects an obstacle, the planning layer does not receive a translated, formatted, latency-delayed copy of that detection — it accesses the same internal state, with the same timestamp, the same confidence metrics, and the same uncertainty model.
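A minimal sketch of the idea, under stated assumptions: the `Detection` and `WorldState` types, their field names, and the margin rule in `plan_clearance` are all hypothetical illustrations, not the data model of any particular platform. The point is only that the planner reads the very object perception wrote, including its timestamp, confidence, and uncertainty.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class Detection:
    """One obstacle estimate, kept in the form perception produced it."""
    timestamp_s: float               # sensor capture time, seconds
    position_m: Tuple[float, float]  # (x, y) in the vehicle frame, metres
    confidence: float                # detector confidence in [0, 1]
    position_std_m: float            # 1-sigma position uncertainty, metres


@dataclass
class WorldState:
    """Hypothetical in-process store that every subsystem reads and writes."""
    detections: List[Detection] = field(default_factory=list)

    def publish(self, detection: Detection) -> None:
        self.detections.append(detection)


def plan_clearance(state: WorldState, base_margin_m: float = 0.5) -> List[float]:
    """Illustrative rule: widen the safety margin by two sigma per detection."""
    return [base_margin_m + 2.0 * d.position_std_m for d in state.detections]


# Perception publishes once; planning reads the same object, not a copy.
state = WorldState()
obstacle = Detection(timestamp_s=12.350, position_m=(8.0, 1.2),
                     confidence=0.91, position_std_m=0.15)
state.publish(obstacle)
margins = plan_clearance(state)
```

Because the planner sees `position_std_m` directly, it can size its margin from the detector's own uncertainty rather than from a fixed guess.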

In a multi-vendor stack, this coherence is structurally impossible. Data must be serialised, transmitted over an interface, deserialised, and interpreted by a system that was not designed with knowledge of how the sending system represents uncertainty and confidence. Every translation is a potential source of information loss. Every interface is a potential source of latency. And latency in a real-time autonomy system is not a performance issue — it is a safety issue.
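Both effects can be illustrated with a short sketch. Everything here is an assumption made for illustration: the field names, the two-field interface schema, the per-hop latencies, and the vehicle speed are invented, not measurements from any real stack.

```python
import json
from typing import Sequence

# Hypothetical detection as the perception side holds it internally.
internal = {
    "timestamp_s": 12.350,
    "position_m": [8.0, 1.2],
    "confidence": 0.91,
    "position_std_m": 0.15,
}

# Hypothetical vendor interface schema: only these fields cross the wire.
INTERFACE_FIELDS = ("timestamp_s", "position_m")


def cross_interface(detection: dict) -> dict:
    """Serialise to the agreed schema, then deserialise on the receiving side."""
    wire = json.dumps({key: detection[key] for key in INTERFACE_FIELDS})
    return json.loads(wire)


def blind_distance_m(speed_mps: float, hop_latencies_ms: Sequence[float]) -> float:
    """Distance travelled before the receiver can act on the detection."""
    return speed_mps * sum(hop_latencies_ms) / 1000.0


received = cross_interface(internal)
lost_fields = sorted(set(internal) - set(received))

# Assumed delays: serialise, transmit, deserialise, interpret (milliseconds).
distance = blind_distance_m(15.0, [4.0, 10.0, 4.0, 12.0])
```

In this toy case the confidence and uncertainty fields never reach the receiver, and 30 ms of accumulated interface delay at 15 m/s means the vehicle covers roughly 0.45 m before the planner can react.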

The real cost of a multi-vendor autonomy stack

Procurement teams comparing autonomy solutions on a component-by-component basis typically undercount the cost of integration by a factor of three to five. The licence fees for individual components are visible and comparable. The engineering time required to build, maintain, and debug the interfaces between those components is not in any vendor’s pricing sheet. Neither is the cost of the dedicated integration engineer who becomes the permanent owner of the seams between systems. Neither is the delay to deployment caused by a compatibility issue discovered six months into the programme.

Organisations that have run both approaches — assembling best-of-breed components versus deploying a full-stack platform — consistently report that total cost of ownership for assembled stacks exceeds full-stack platforms by a significant margin once integration labour, deployment delay, and ongoing maintenance are included. The component-by-component approach optimises for the visible cost while ignoring the invisible cost that ultimately dominates the programme budget.

What hardware-agnostic full-stack actually means

A common misconception about full-stack autonomy platforms is that they amount to hardware lock-in by another name. This conflates vertical integration with proprietary dependency. The Robotonomous LTA platform is hardware-agnostic by design: the same perception, planning, and control stack runs across different sensor configurations, actuator types, and vehicle platforms. Locking customers to specific hardware would limit the addressable market and recreate the same integration problems the platform is designed to solve.

What hardware-agnostic full-stack means in practice is that the interfaces between subsystems are internal, coherent, and maintained by a single engineering team with visibility across the entire stack. When a sensor is upgraded, the implications for the planning layer are understood and handled by the same team. When a new actuator platform is supported, the control stack adaptation is engineered with full knowledge of the perception and planning layers it must serve.
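One common way to get this property is an adapter layer that normalises every sensor into a single internal representation. The sketch below assumes that pattern; `RangeSensor`, `PolarLidar`, and `MillimetreDepthCamera` are hypothetical names, and nothing here describes how the Robotonomous LTA platform is actually implemented.

```python
import math
from abc import ABC, abstractmethod
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (x, y) in the vehicle frame, metres


class RangeSensor(ABC):
    """Hypothetical adapter interface shared by every supported sensor."""

    @abstractmethod
    def points_m(self) -> List[Point]:
        """Return obstacle points in metres, vehicle frame."""


class PolarLidar(RangeSensor):
    """Stand-in for a sensor that natively reports (angle_rad, range_m)."""

    def __init__(self, returns: Sequence[Tuple[float, float]]) -> None:
        self._returns = returns

    def points_m(self) -> List[Point]:
        return [(r * math.cos(a), r * math.sin(a)) for a, r in self._returns]


class MillimetreDepthCamera(RangeSensor):
    """Stand-in for a sensor that natively reports (x_mm, y_mm)."""

    def __init__(self, points_mm: Sequence[Tuple[float, float]]) -> None:
        self._points_mm = points_mm

    def points_m(self) -> List[Point]:
        return [(x / 1000.0, y / 1000.0) for x, y in self._points_mm]


def nearest_obstacle_m(sensor: RangeSensor) -> float:
    """Downstream logic sees only the shared representation, never the device."""
    return min(math.hypot(x, y) for x, y in sensor.points_m())


lidar = PolarLidar([(0.0, 5.0), (math.pi / 2, 3.0)])
camera = MillimetreDepthCamera([(4000.0, 3000.0), (6000.0, 8000.0)])
lidar_nearest = nearest_obstacle_m(lidar)
camera_nearest = nearest_obstacle_m(camera)
```

Swapping a sensor then means writing one new adapter, while `nearest_obstacle_m` and everything downstream of it stay unchanged.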

Questions to ask any autonomy vendor before signing

If your team is evaluating autonomy software, whether a full-stack platform or individual components, five questions will reveal more about real-world performance than any benchmark sheet:

1. What is your data model for environment representation, and how do your subsystems share state?
2. Who owns the interface between your perception and planning layers?
3. What is your documented process for handling edge cases that fall between subsystem boundaries?
4. Can you provide references from programmes that have operated at commercial scale, not just pilot deployments?
5. What does your failure mode look like when one subsystem degrades: does the stack fail gracefully or catastrophically?
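The fifth question becomes easier to discuss with a concrete picture of what "graceful" could mean. The sketch below is one hypothetical policy, not a recommendation or any vendor's behaviour: the `Health` states, the 25% degraded-speed factor, and the 10 m/s nominal speed are all assumptions chosen for illustration.

```python
from enum import Enum


class Health(Enum):
    NOMINAL = "nominal"
    DEGRADED = "degraded"
    FAILED = "failed"


# Assumed policy value: fraction of nominal speed allowed when degraded.
DEGRADED_SPEED_FACTOR = 0.25


def speed_limit_mps(perception: Health, planning: Health, control: Health,
                    nominal_mps: float = 10.0) -> float:
    """Map subsystem health to a speed cap instead of an all-or-nothing switch."""
    states = (perception, planning, control)
    if Health.FAILED in states:
        return 0.0  # any hard failure: command a controlled stop
    if Health.DEGRADED in states:
        return nominal_mps * DEGRADED_SPEED_FACTOR  # keep moving, but slowly
    return nominal_mps


all_good = speed_limit_mps(Health.NOMINAL, Health.NOMINAL, Health.NOMINAL)
foggy = speed_limit_mps(Health.DEGRADED, Health.NOMINAL, Health.NOMINAL)
broken = speed_limit_mps(Health.NOMINAL, Health.NOMINAL, Health.FAILED)
```

A vendor's real answer will be far more detailed, but it should be possible to state it at this level: which health states exist, who reports them, and what the vehicle does in each.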

The answers to these questions separate systems that have been engineered as coherent wholes from systems that have been assembled from capable but independent parts. For any autonomous vehicle or robotics programme where real-world performance and safety are non-negotiable, that distinction is the most important factor in vendor selection.