“Picking up a water glass should be trivial. For robots, it is still a holy grail.”

The problem that embarrasses robotics engineers

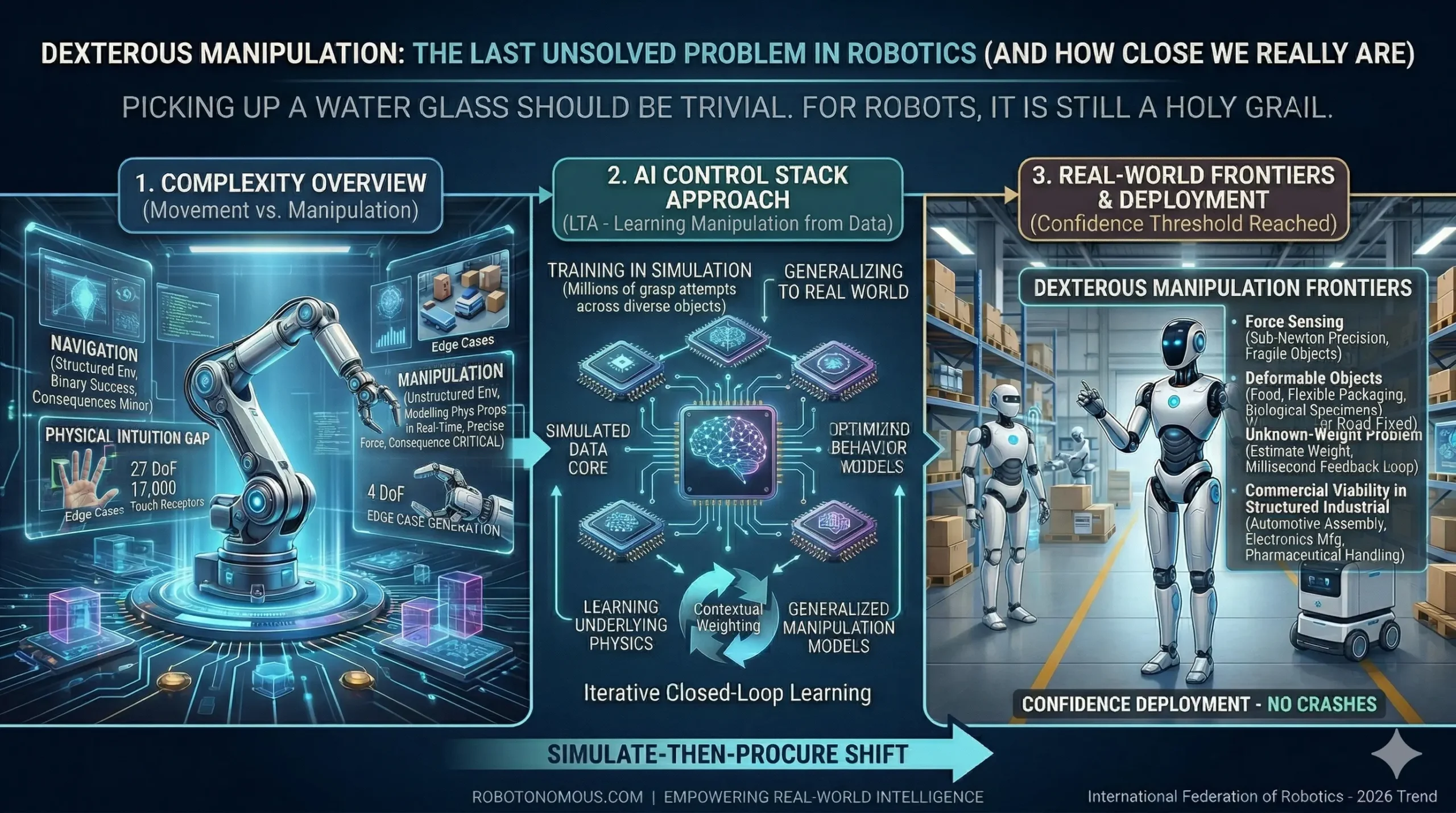

Ask a robotics engineer what their field’s greatest unsolved challenge is, and many will give you an answer that surprises non-engineers: picking things up. Not navigating complex environments. Not processing sensor data at speed. Not making real-time decisions under uncertainty. The hardest open problem in applied robotics is dexterous manipulation — the ability to handle physical objects with the kind of reliable, adaptive precision that humans exercise thousands of times every day without conscious thought.

Amazon’s chief roboticist called it a holy grail at Davos 2026. The World Economic Forum’s panel on physical AI identified object manipulation as the critical frontier separating today’s capable but brittle industrial robots from the general-purpose physical AI systems the industry is working toward. Understanding why this problem is so hard — and how close the field actually is to solving it — matters for anyone deploying or designing autonomous systems in 2026.

Why manipulation is orders of magnitude harder than movement

A robot that navigates a warehouse faces a well-defined problem: move from point A to point B without hitting anything. The environment is relatively structured, the success criteria are binary, and the consequences of a minor error are usually minor. A robot that must pick up an object faces something fundamentally different: it must model the object’s physical properties in real time — its weight, rigidity, surface friction, centre of mass — using only the sensory information available at the moment of contact, and it must apply precisely calibrated force to avoid crushing, dropping, or misaligning the object.

Human hands solve this problem with 27 degrees of freedom, 17,000 touch receptors per square centimetre of fingertip, and a lifetime of learned physical intuition operating below conscious awareness. Current robotic grippers, even sophisticated multi-fingered end-effectors, approximate a fraction of this capability. The gap is not primarily mechanical — it is the AI control stack that needs to bridge the distance between sensor input and motor output with human-like adaptiveness.

The AI control stack approach: learning manipulation from data

The most significant recent progress in dexterous manipulation has come not from mechanical innovation but from applying large-scale machine learning to the manipulation problem. Rather than programming explicit grasping strategies for every object type, modern robotic manipulation systems are trained on massive datasets of successful and failed grasps — learning, through exposure, the relationship between object appearance, contact physics, and motor command.

The Robotonomous AI-based control stack applies this approach within the LTA architecture. Manipulation policies are trained in high-fidelity simulation — generating millions of grasp attempts across diverse object types, surface properties, and orientations without the time and hardware cost of physical trials. The resulting models generalise to real-world objects with a robustness that rule-based approaches cannot match, because the system has learned the underlying physics rather than memorised a finite set of object-specific strategies.

Force sensing, deformable objects, and the unknown-weight problem

Three specific sub-problems define the current frontier of dexterous manipulation research and deployment. Force sensing addresses the need to modulate grip force dynamically — applying enough force to secure an object without damaging it, adjusting continuously as the robot’s understanding of the object’s properties is refined through contact. For fragile objects, this requires sub-Newton precision. For deformable objects — food products, flexible packaging, biological specimens — it requires modelling how the object will change shape under applied force.

The unknown-weight problem is particularly challenging in unstructured environments. A robot that must pick up objects it has never encountered before cannot rely on a database of known masses. It must estimate weight from visual appearance and then immediately correct its force model based on the feedback it receives in the first milliseconds of lift. This requires not just a capable actuator but an intelligent feedback loop between perception, estimation, and motor control operating at millisecond timescales.

Where dexterous manipulation stands in 2026 — and what comes next

In structured industrial environments with known object types, robotic manipulation has reached commercial viability. Automotive assembly, electronics manufacturing, and pharmaceutical handling are all seeing productive deployments of AI-enabled manipulation systems. The remaining frontier is unstructured environments — the retail warehouse picking problem, the domestic robot handling arbitrary household objects, the surgical robot operating on patient anatomy that varies continuously.

Robotonomous’ position at this frontier is defined by the integration of manipulation capability within the full LTA stack. Manipulation is not treated as an isolated capability but as one layer of a complete autonomous system — informed by the same sensor fusion pipeline that enables environment understanding, trained in the same high-fidelity simulation environment that validates full-system behaviour, and governed by the same agentic decision-making layer that handles unexpected situations. This integration is what will close the gap between manipulation in controlled conditions and manipulation in the real world.