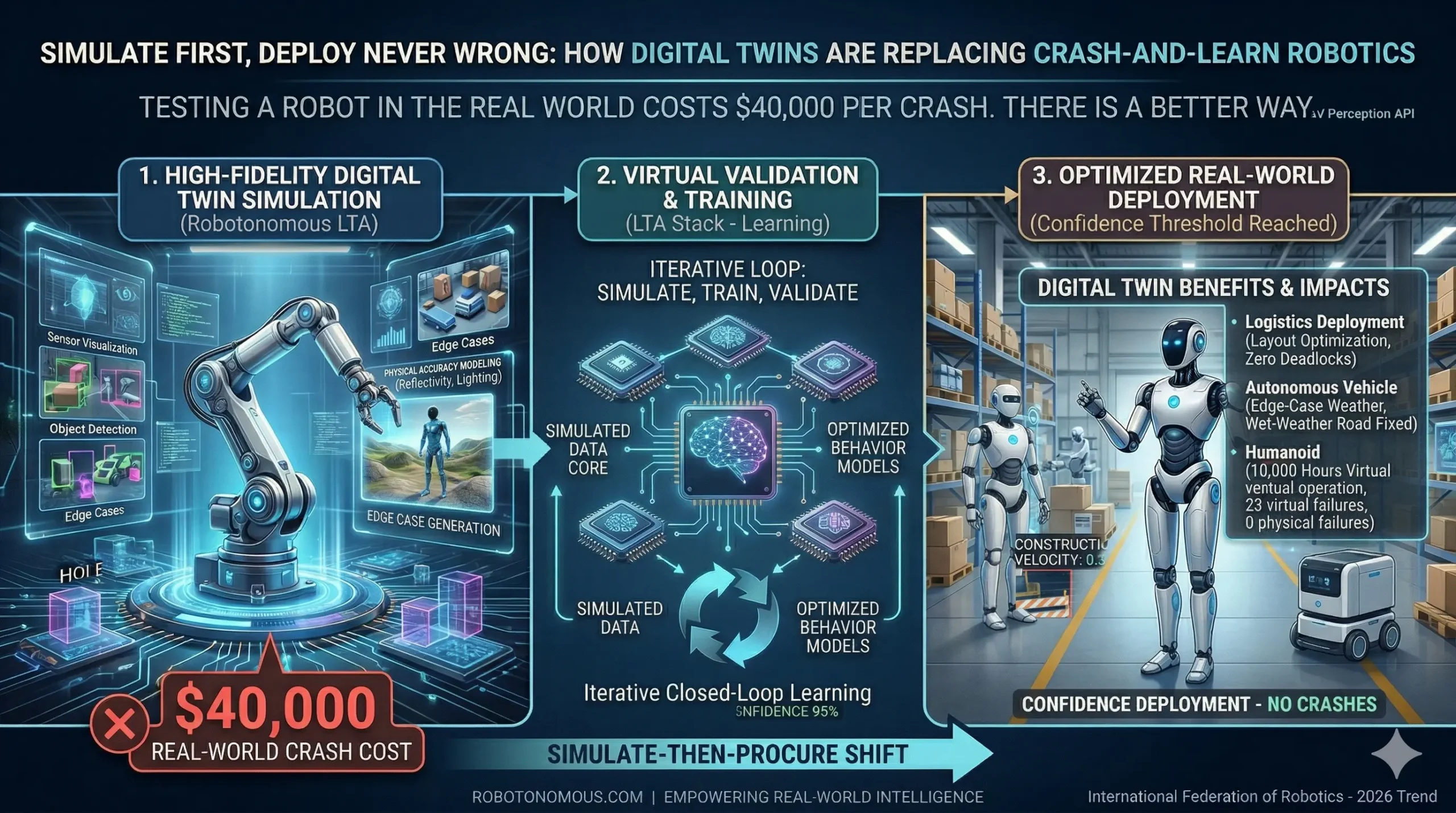

“Testing a robot in the real world costs $40,000 per crash. There is a better way.”

The hidden cost of testing robots in the real world

The robotics industry has a testing problem it rarely talks about publicly. Whenever a robot or autonomous vehicle encounters a scenario its training data did not cover (an unexpected obstacle, an unusual surface, an edge-case sensor reading), the outcome can be anything from a mission abort to physical damage or, in the worst cases, a safety incident. The cost of a single real-world failure in a commercial robotics deployment is not just the repair bill. It is downtime, lost production, liability exposure, and the engineering hours required to diagnose what went wrong.

For autonomous vehicle programs, the challenge is even starker. Physical test fleets are expensive to operate. Public road testing is legally and logistically complex. And even a large number of failure-free kilometres is not, by itself, statistically sufficient to validate safety for rare but catastrophic edge cases: scenarios that might occur once in a million kilometres but must be handled correctly every single time.
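The statistical point can be made concrete with a standard zero-failure bound. If failures are modelled as a Poisson process with a constant rate per kilometre (a simplifying assumption), the probability of driving n kilometres with no failures is exp(-rate × n). Showing with 95% confidence that the failure rate is below one per million kilometres therefore takes about ln(20) / 10⁻⁶, or roughly 3 million failure-free kilometres, and that covers a single failure mode. The function name below is illustrative.

```python
import math


def failure_free_km_required(max_rate_per_km: float, confidence: float = 0.95) -> float:
    """Kilometres that must be driven with zero failures to claim, at the given
    confidence level, that the true failure rate is below max_rate_per_km.

    Assumes failures follow a Poisson process with a constant rate per km.
    """
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    if max_rate_per_km <= 0.0:
        raise ValueError("max_rate_per_km must be positive")
    alpha = 1.0 - confidence
    # P(no failures in n km) = exp(-rate * n). Requiring this to be <= alpha
    # at rate = max_rate_per_km gives n >= ln(1 / alpha) / max_rate_per_km.
    return math.log(1.0 / alpha) / max_rate_per_km


if __name__ == "__main__":
    # An edge case assumed to cause at most one failure per million kilometres.
    km = failure_free_km_required(1e-6, confidence=0.95)
    print(f"{km:,.0f} failure-free km needed")
```

Raising the confidence level to 99% pushes the figure to roughly 4.6 million kilometres, which is why road mileage alone scales poorly as a validation strategy for rare events.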

What high-fidelity simulation actually means

When engineers say ‘simulation,’ they do not all mean the same thing. A basic physics engine that models rigid-body dynamics is simulation. So is the kind of photorealistic, sensor-accurate, computationally precise digital twin environment that Robotonomous uses for LTA system validation. The difference in capability between the two is not a matter of degree but a matter of kind.

High-fidelity simulation reproduces not just the geometry of a robot’s operating environment but the full sensor experience of operating within it. LiDAR point clouds that reflect real material properties. Camera feeds with accurate lighting, lens distortion, and atmospheric effects. Radar returns that model surface reflectivity correctly. This level of physical accuracy means that a perception model trained and validated in simulation behaves predictably when deployed on hardware, because the gap between the simulated environment and reality has been methodically narrowed.
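To illustrate what "reflecting real material properties" involves at the most basic level, the sketch below models a single LiDAR beam hitting a diffuse surface. It is a deliberately simplified, hypothetical model (Lambertian reflection, inverse-square falloff, Gaussian range noise with an assumed 2 cm standard deviation), not the sensor model used in the Robotonomous platform; higher-fidelity sensor models typically add effects such as beam divergence, atmospheric attenuation, and multiple returns.

```python
import math
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class LidarReturn:
    range_m: float    # measured range, including simulated noise
    intensity: float  # relative return strength (arbitrary units)


def simulate_return(
    true_range_m: float,
    reflectivity: float,
    incidence_deg: float,
    range_noise_m: float = 0.02,
    rng: Optional[random.Random] = None,
) -> Optional[LidarReturn]:
    """Toy single-beam LiDAR return for a diffuse (Lambertian) surface.

    Intensity scales with surface reflectivity and the cosine of the
    incidence angle, and falls off with the square of range. Returns None
    when the beam grazes the surface (|incidence| >= 90 degrees).
    """
    if true_range_m <= 0.0:
        raise ValueError("true_range_m must be positive")
    if not 0.0 <= reflectivity <= 1.0:
        raise ValueError("reflectivity must be in [0, 1]")
    angle = abs(incidence_deg)
    if angle >= 90.0:
        return None  # no usable return at grazing incidence
    rng = rng or random.Random()
    intensity = reflectivity * math.cos(math.radians(angle)) / true_range_m**2
    measured = true_range_m + rng.gauss(0.0, range_noise_m)
    return LidarReturn(range_m=measured, intensity=intensity)


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical dark and bright surfaces at the same range and angle.
    dark = simulate_return(20.0, reflectivity=0.1, incidence_deg=30.0, rng=rng)
    bright = simulate_return(20.0, reflectivity=0.8, incidence_deg=30.0, rng=rng)
    print(dark, bright)
```

Even in this toy model, two surfaces at identical positions produce an eightfold difference in return intensity, which is the kind of signal a perception model can learn to rely on and which a geometry-only simulator would not produce.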

The simulate-then-procure shift: why the industry is changing now

Industry analysts in 2026 are documenting a fundamental shift in how manufacturing and logistics organizations approach robotics investment. The old model — specify requirements, purchase hardware, run trials, discover problems, iterate — is being replaced by a simulate-then-procure methodology. Before a single dollar is committed to physical hardware, the entire deployment scenario is built, tested, and stress-tested in a digital twin environment.

This shift is driven by three converging pressures. First, the rising cost of capital makes speculative hardware investments increasingly difficult to justify. Second, the complexity of modern robotics deployments means that integration failures are expensive and slow to diagnose. Third, simulation platforms, particularly high-fidelity tools like those underlying the Robotonomous digital twin framework, have matured to the point where simulation results are genuinely predictive of physical performance.

Three real scenarios where digital twins prevented failure

In a logistics deployment, a client used Robotonomous simulation tools to test an autonomous mobile robot fleet across 47 distinct warehouse configurations before selecting their final floor layout. Simulation revealed that one planned aisle configuration created a deadlock condition under high traffic load, a problem that would otherwise have required physical reconfiguration after installation. Because it was identified and resolved in simulation, it caused zero real-world downtime.
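One common way a fleet simulator can detect the kind of deadlock described above is to build a wait-for graph at each time step and search it for a cycle. The sketch below is an illustration under stated assumptions (each blocked robot waits on exactly one other robot; robot IDs are hypothetical), not a description of the Robotonomous tooling.

```python
from typing import Dict, List, Optional


def find_deadlock(waits_for: Dict[str, str]) -> Optional[List[str]]:
    """Return a cycle of robots that are all waiting on one another, or None.

    waits_for maps each blocked robot to the robot holding the aisle segment
    it needs next. Assumes each robot waits on at most one other robot, so
    any cycle in this wait-for graph is a deadlock: no robot in it can move.
    """
    for start in waits_for:
        path: List[str] = []
        position: Dict[str, int] = {}  # robot -> index in path
        robot = start
        # Follow the chain of "waits for" edges until it ends or repeats.
        while robot in waits_for and robot not in position:
            position[robot] = len(path)
            path.append(robot)
            robot = waits_for[robot]
        if robot in position:
            return path[position[robot]:]  # the repeated segment is the cycle
    return None


if __name__ == "__main__":
    # Hypothetical snapshot from one simulation time step.
    snapshot = {"amr_1": "amr_2", "amr_2": "amr_3", "amr_3": "amr_1", "amr_4": "amr_1"}
    print(find_deadlock(snapshot))  # ['amr_1', 'amr_2', 'amr_3']
```

Running a check like this at every step while sweeping traffic load across candidate layouts would surface a cycle that only forms under heavy load, which is the layout-dependent failure the example describes.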

In an autonomous vehicle validation program, edge-case weather scenarios — heavy rain combined with low sun angle — were replicated in simulation to validate sensor fusion behaviour before any wet-weather road testing was approved. Simulation identified a specific LiDAR-camera conflict mode that required a fusion weight adjustment. The fix was deployed in software before the first physical test drive in adverse conditions.
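The example does not say how the fusion weights are set. One common scheme, shown here purely as an illustration, is inverse-variance weighting: each sensor's estimate is weighted by how much it is trusted under current conditions, so a "fusion weight adjustment" amounts to changing the noise assumed for a sensor in a given condition. For two measurements of the same quantity this is equivalent to a one-dimensional Kalman update. The function name and all variance values are hypothetical.

```python
from typing import NamedTuple


class FusedEstimate(NamedTuple):
    range_m: float
    variance: float
    lidar_weight: float


def fuse_ranges(lidar_m: float, lidar_var: float,
                camera_m: float, camera_var: float) -> FusedEstimate:
    """Inverse-variance weighted fusion of two independent range estimates.

    The sensor with the smaller variance (higher confidence) gets the
    larger weight. The fused variance is never larger than either input.
    """
    if lidar_var <= 0.0 or camera_var <= 0.0:
        raise ValueError("variances must be positive")
    lidar_info = 1.0 / lidar_var    # information = inverse variance
    camera_info = 1.0 / camera_var
    w_lidar = lidar_info / (lidar_info + camera_info)
    fused = w_lidar * lidar_m + (1.0 - w_lidar) * camera_m
    return FusedEstimate(fused, 1.0 / (lidar_info + camera_info), w_lidar)


if __name__ == "__main__":
    # Hypothetical per-condition noise variances (m^2), not measured values.
    # A degraded condition is modelled by inflating one sensor's variance.
    nominal = fuse_ranges(20.0, 0.01, 21.0, 0.25)
    degraded = fuse_ranges(20.0, 0.25, 21.0, 0.25)
    print(f"nominal:  w_lidar={nominal.lidar_weight:.2f}, range={nominal.range_m:.2f} m")
    print(f"degraded: w_lidar={degraded.lidar_weight:.2f}, range={degraded.range_m:.2f} m")
```

Because the weights are derived from per-condition noise assumptions, a correction found in simulation can ship as a parameter change in software, consistent with the fix described above being deployed before any physical adverse-weather drive.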

In a humanoid robot deployment for a manufacturing client, digital twin simulation ran the robot through 10,000 hours of virtual operation (equivalent to more than a year of continuous, round-the-clock runtime) in under two weeks of cloud compute time. Failure modes discovered and resolved in simulation: 23. Failure modes discovered in physical deployment: zero.
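The figures in this example are easy to sanity-check. A year of continuous operation is 8,760 hours, so 10,000 virtual hours is about 1.14 years. Fitting that into two weeks (336 hours) of wall-clock time requires an aggregate speed-up of roughly 30x over real time, for instance by running many simulation instances in parallel or running them faster than real time. The sketch assumes 24/7 operation; a robot working shorter days would take even longer to accumulate the same hours.

```python
from typing import Tuple

HOURS_PER_YEAR = 365 * 24  # 8,760 h, assuming continuous 24/7 operation


def simulation_scale(virtual_hours: float, wall_clock_hours: float) -> Tuple[float, float]:
    """Return (equivalent years of 24/7 runtime, required speed-up over real time)."""
    if wall_clock_hours <= 0.0:
        raise ValueError("wall_clock_hours must be positive")
    years = virtual_hours / HOURS_PER_YEAR
    speedup = virtual_hours / wall_clock_hours
    return years, speedup


if __name__ == "__main__":
    years, speedup = simulation_scale(10_000, 14 * 24)  # two weeks = 336 h
    print(f"{years:.2f} years of 24/7 runtime, at least {speedup:.1f}x real time")
```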

How Robotonomous digital twins connect to the full LTA stack

The Robotonomous high-fidelity simulation environment is not a standalone testing tool. It is the training ground for the Learning layer of the LTA architecture. Every simulation run generates training data — sensor readings, environmental states, robot decisions, outcomes — that feeds directly into the model training pipeline. The digital twin and the autonomy system improve together, iteratively, until deployment confidence reaches the threshold required for real-world operation.

This closed-loop architecture — simulate, train, validate, simulate again — is what allows Robotonomous clients to deploy autonomous systems with a level of validated confidence that crash-and-learn robotics programs simply cannot match.
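The simulate, train, validate cycle described above can be sketched as a simple loop. Everything here is a hypothetical stand-in: the "model" is a single number (the probability of handling a scenario), the three callables replace what would in practice be a simulator, a training pipeline, and a held-out validation suite, and the 0.99 threshold is an assumed deployment criterion. It shows the control flow only, not the Robotonomous LTA implementation.

```python
import random
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class LoopResult:
    model: float      # toy "model": probability of handling a scenario
    score: float      # last validation success rate
    rounds: int       # simulate-train-validate iterations performed
    deployable: bool  # True if score reached the confidence threshold


def closed_loop(
    model: float,
    simulate: Callable[[float], List[bool]],
    train: Callable[[float, List[bool]], float],
    validate: Callable[[float], float],
    threshold: float,
    max_rounds: int = 20,
) -> LoopResult:
    """Repeat simulate -> train -> validate until the validation score
    reaches the threshold or the round budget runs out."""
    score = validate(model)
    rounds = 0
    while score < threshold and rounds < max_rounds:
        runs = simulate(model)      # 1. simulate: generate outcome data
        model = train(model, runs)  # 2. train on the simulated outcomes
        score = validate(model)     # 3. validate on fresh simulated scenarios
        rounds += 1
    return LoopResult(model, score, rounds, score >= threshold)


if __name__ == "__main__":
    rng = random.Random(42)

    def toy_simulate(skill: float) -> List[bool]:
        # True = scenario handled, False = failure (hypothetical stand-in).
        return [rng.random() < skill for _ in range(200)]

    def toy_train(skill: float, runs: List[bool]) -> float:
        # Hypothetical learning rule: close half the remaining gap, but only
        # when the simulated runs surfaced failures to learn from.
        return skill + 0.5 * (1.0 - skill) if False in runs else skill

    def toy_validate(skill: float) -> float:
        held_out = [rng.random() < skill for _ in range(1_000)]
        return sum(held_out) / len(held_out)

    print(closed_loop(0.6, toy_simulate, toy_train, toy_validate, threshold=0.99))
```

Keeping validation on fresh scenarios, separate from the runs used for training, is what makes the stopping condition a meaningful confidence check rather than a measure of how well the model fits data it has already seen.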