“Every sensor sees something. The real challenge is making them agree.”

The perception myth the AV industry doesn’t talk about

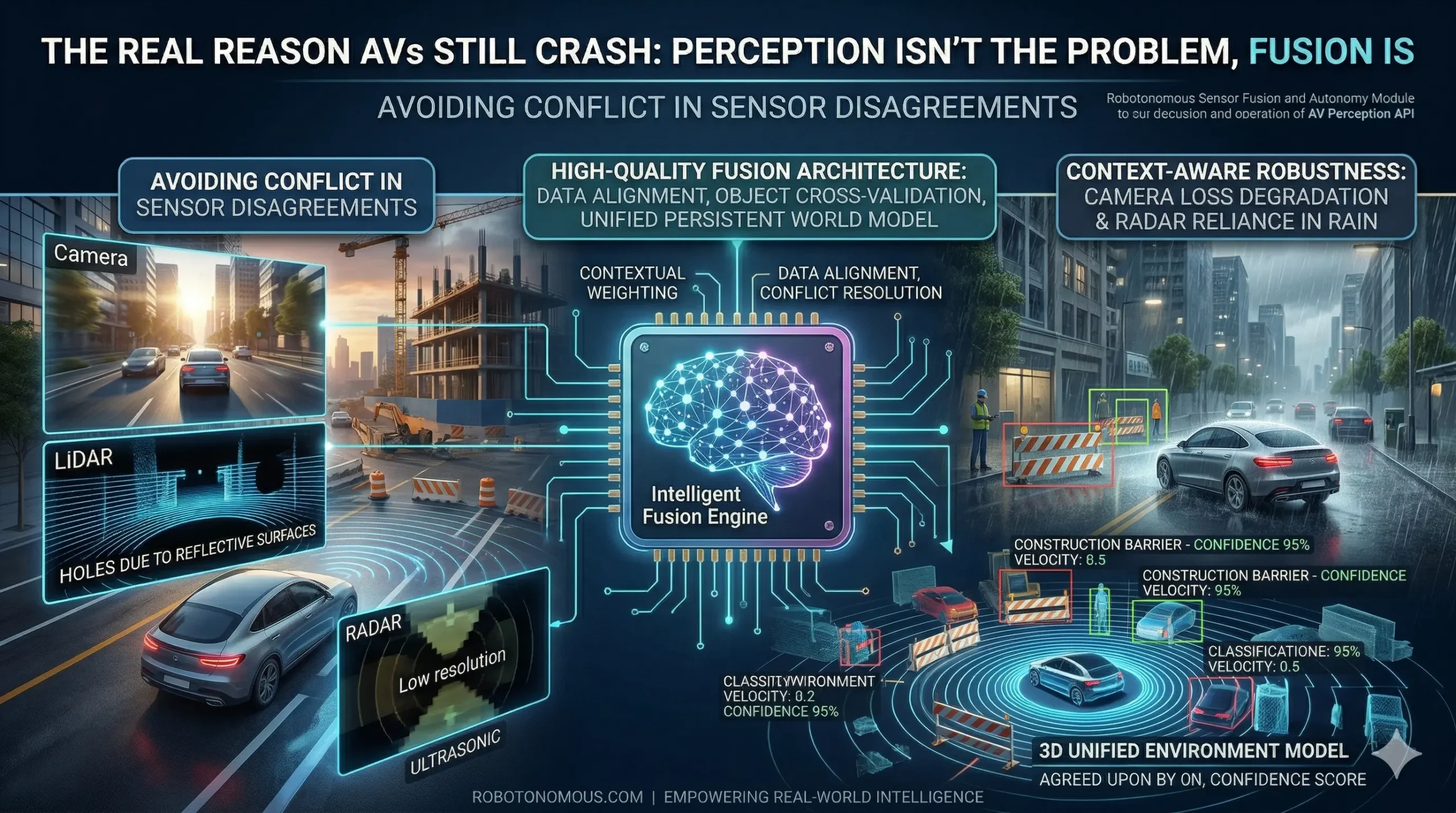

When an autonomous vehicle makes a mistake, the first assumption is almost always the same: a sensor failed. And that assumption is almost always wrong. Sensors — LiDAR, cameras, radar, and ultrasonic arrays — have become remarkably reliable. The real failure point is almost never what a sensor sees. It is what happens when multiple sensors disagree about what they are seeing, and the system has no intelligent way to resolve the conflict.

This is the sensor fusion problem, and it is responsible for the majority of real-world AV edge-case failures in 2026. Understanding it is the difference between building an autonomous vehicle that performs in a lab and one that performs in the rain, at dusk, at a construction site, when one of your sensors is partially obscured.

Why single-sensor autonomy always breaks

Camera-only systems are brilliant in good lighting and terrible in low light, fog, or direct glare. LiDAR gives precise 3D geometry but struggles with highly reflective surfaces and produces no color data. Radar penetrates weather and darkness but has low spatial resolution and cannot identify object type with confidence. Each sensor has a domain where it excels and a domain where it fails. Autonomous vehicles that rely on any single sensor modality are, in effect, driving with one eye closed.

The AV systems that achieve genuine real-world robustness — the ones that pass regulatory thresholds, that operate commercially without safety drivers — all use multi-modal sensor fusion. Not as a nice-to-have. As a foundational architectural requirement.

What high-quality sensor fusion actually looks like

Effective sensor fusion is not simply combining sensor feeds. It is a structured process of data alignment, conflict resolution, and unified environment modeling. At the raw data level, sensor streams are time-synchronized and spatially calibrated into a shared coordinate frame. At the object level, detection outputs from each sensor are cross-validated — objects confirmed by multiple modalities receive higher confidence scores than those seen by only one. At the environment level, a persistent world model is maintained and updated in real time, tracking object state even during brief sensor dropout.
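To make the first two layers concrete, here is a minimal sketch of spatial alignment and object-level cross-validation. Everything in it is an illustrative assumption rather than a description of any production system: the `Detection` and `Extrinsics` types, the 2D simplification, the 1.5 m association gate, and the noisy-OR rule for combining confidences, which treats the sensors as independent.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    sensor: str        # e.g. "camera", "lidar", "radar"
    x: float           # position in meters
    y: float
    confidence: float  # detector score in [0, 1]


@dataclass
class Extrinsics:
    """Hypothetical 2D mounting pose of a sensor in the vehicle frame."""
    tx: float
    ty: float
    yaw: float  # radians


@dataclass
class FusedObject:
    x: float
    y: float
    sensors: List[str]
    confidence: float


def to_vehicle_frame(det: Detection, ext: Extrinsics) -> Detection:
    """Rotate and translate a detection from its sensor frame into the shared frame."""
    c, s = math.cos(ext.yaw), math.sin(ext.yaw)
    return Detection(det.sensor,
                     c * det.x - s * det.y + ext.tx,
                     s * det.x + c * det.y + ext.ty,
                     det.confidence)


def noisy_or(confidences: List[float]) -> float:
    """Probability that at least one detector is right, assuming independence."""
    miss = 1.0
    for conf in confidences:
        miss *= 1.0 - conf
    return 1.0 - miss


def cross_validate(detections: List[Detection], gate_m: float = 1.5) -> List[FusedObject]:
    """Greedily group detections from different sensors that fall within gate_m."""
    clusters: List[List[Detection]] = []
    for det in detections:
        for cluster in clusters:
            anchor = cluster[0]
            close = math.hypot(det.x - anchor.x, det.y - anchor.y) <= gate_m
            new_sensor = all(d.sensor != det.sensor for d in cluster)
            if close and new_sensor:
                cluster.append(det)
                break
        else:
            clusters.append([det])
    return [FusedObject(c[0].x, c[0].y,
                        sorted(d.sensor for d in c),
                        noisy_or([d.confidence for d in c]))
            for c in clusters]
```

With this rule, an object seen by a camera at confidence 0.6 and confirmed by radar at 0.5 ends up with a fused confidence of 0.8, while an object seen by only one sensor keeps that sensor's score. A real pipeline would also time-synchronize the streams before association and would likely use a proper assignment algorithm instead of greedy grouping.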

The Robotonomous sensor fusion and autonomy module is built on this three-layer architecture. When a camera loses visibility due to glare and radar detects an object at the same coordinates, the system does not freeze or fail — it degrades gracefully, weighting the higher-confidence modality while flagging the degraded channel for monitoring. The result is a system that continues operating reliably in conditions where single-modality systems would produce dangerous uncertainty.
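The graceful-degradation behavior described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the module's actual implementation: the `ChannelReading` type, the `DEGRADED_BELOW` threshold of 0.2, and the confidence-weighted average are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ChannelReading:
    sensor: str
    range_m: float     # estimated distance to the object
    confidence: float  # self-reported confidence in [0, 1]


# Assumed threshold below which a channel is treated as degraded.
DEGRADED_BELOW = 0.2


def fuse_range(readings: List[ChannelReading]) -> Tuple[Optional[float], List[str]]:
    """Return a confidence-weighted range plus the channels flagged as degraded.

    Degraded channels are excluded from the estimate but reported, so that
    monitoring can track them instead of the pipeline failing outright.
    """
    degraded = [r.sensor for r in readings if r.confidence < DEGRADED_BELOW]
    usable = [r for r in readings if r.confidence >= DEGRADED_BELOW]
    if not usable:
        return None, degraded  # nothing trustworthy; the caller must handle this
    total = sum(r.confidence for r in usable)
    fused = sum(r.range_m * r.confidence for r in usable) / total
    return fused, degraded


# Glare scenario: the camera is nearly blind, radar still sees the object.
estimate, flagged = fuse_range([
    ChannelReading("camera", 0.0, 0.05),
    ChannelReading("radar", 30.0, 0.9),
])
print(estimate, flagged)
```

In the glare scenario the estimate comes entirely from radar, and the camera appears in the flagged list rather than silently dragging the result toward zero.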

The edge case problem: what happens when sensors disagree

The hardest test for any fusion system is not nominal operation. It is sensor conflict: the scenario where two reliable sensors return genuinely different readings for the same object. A classic example is a large reflective truck trailer. Radar detects it at 30 meters, but LiDAR returns inconsistent point clouds because of the trailer's surface reflectivity. The camera detects the trailer clearly, so two modalities agree and one dissents. That two-versus-one conflict needs an intelligent resolution strategy in the fusion layer, not a simple voting mechanism.

Robotonomous addresses this through contextual weighting — the system understands which sensor modality is most reliable in the current environmental conditions and dynamically adjusts confidence weights accordingly. In rain, radar is upweighted. In clear daylight with high feature density, camera and LiDAR dominate. This context-awareness is what separates a production-grade fusion system from a research prototype.
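A toy comparison shows why contextual weighting and simple voting can reach different conclusions. The weight table, the context names, and the 0.5 decision threshold below are made-up values for illustration only. They follow the pattern described above (radar upweighted in rain, camera and LiDAR dominant in clear daylight) but are not taken from any real system.

```python
from typing import Dict

# Hypothetical per-context reliability weights; each row sums to 1.0.
CONTEXT_WEIGHTS: Dict[str, Dict[str, float]] = {
    "rain":      {"camera": 0.2, "lidar": 0.2, "radar": 0.6},
    "clear_day": {"camera": 0.4, "lidar": 0.4, "radar": 0.2},
}


def weighted_presence(votes: Dict[str, float], context: str) -> float:
    """Context-weighted belief that an object is present.

    votes maps a sensor name to that sensor's confidence in [0, 1].
    """
    weights = CONTEXT_WEIGHTS[context]
    total = sum(weights[s] for s in votes)
    return sum(weights[s] * v for s, v in votes.items()) / total


def majority_vote(votes: Dict[str, float], threshold: float = 0.5) -> bool:
    """Naive baseline: every sensor gets one equal vote."""
    yes = sum(1 for v in votes.values() if v > threshold)
    return yes > len(votes) / 2


# Radar is confident; camera and LiDAR are both unsure.
votes = {"camera": 0.3, "lidar": 0.3, "radar": 0.9}

print(majority_vote(votes))                   # equal votes: object rejected
print(weighted_presence(votes, "rain"))       # radar trusted more in rain
print(weighted_presence(votes, "clear_day"))  # camera and LiDAR dominate
```

With these example numbers the majority vote rejects the object in every condition, while the weighted score crosses 0.5 in rain and stays below it in clear daylight. A production system would presumably derive its weights from calibration data or a learned model instead of a hand-written table.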

From sensor stream to decision: the complete pipeline

The output of a well-engineered fusion pipeline is not a collection of sensor readings. It is a single, unified representation of the operating environment that the planning and control systems can act on with confidence. For Robotonomous clients integrating with our autonomous vehicle perception API, this means receiving a continuously updated 3D environment model — with object classifications, velocity vectors, confidence scores, and occlusion flags — rather than raw sensor data that each downstream system must interpret independently.
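For readers who think in data structures, a unified environment model along these lines might look like the sketch below. The field names and types are hypothetical and chosen only to mirror the attributes listed above. They are not the actual schema of the Robotonomous perception API.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vector3 = Tuple[float, float, float]


@dataclass(frozen=True)
class TrackedObject:
    object_id: int
    classification: str    # e.g. "vehicle", "pedestrian"
    position_m: Vector3    # position in the shared vehicle frame
    velocity_mps: Vector3  # velocity vector
    confidence: float      # fused confidence in [0, 1]
    occluded: bool         # occlusion flag


@dataclass
class EnvironmentModel:
    timestamp_s: float
    objects: List[TrackedObject] = field(default_factory=list)

    def confident_objects(self, min_confidence: float = 0.5) -> List[TrackedObject]:
        """Objects a planner might act on; occluded ones are kept but stay flagged."""
        return [o for o in self.objects if o.confidence >= min_confidence]


model = EnvironmentModel(
    timestamp_s=12.5,
    objects=[
        TrackedObject(1, "vehicle", (30.0, 0.0, 0.0), (-2.0, 0.0, 0.0), 0.92, False),
        TrackedObject(2, "pedestrian", (12.0, 3.5, 0.0), (0.0, -1.2, 0.0), 0.35, True),
    ],
)
for obj in model.confident_objects():
    print(obj.object_id, obj.classification, obj.occluded)
```

The point of the structure is that planning and control read one consistent object list, and no downstream component has to reinterpret raw camera, LiDAR, or radar output on its own.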

This is the architectural shift that makes the difference between an AV program that works in demos and one that works on public roads.