Your robot can move. But can it think when things go wrong?

Modern autonomous robots are engineering marvels. They navigate warehouses, weld car frames, and pilot vehicles across highways, all without a human hand on the controls. But here is the uncomfortable truth the industry rarely discusses: most of today’s autonomous robots are not intelligent in any meaningful sense. They are fast, precise, and expensive, but they execute instructions rather than reason about them.

That gap between movement and intelligence is exactly where agentic AI changes everything.

What “agentic AI” actually means — and why it matters

The word “autonomous” has been stretched to cover a spectrum of capability. A robot that follows a pre-programmed path is called autonomous. So is a robot that detects an obstacle and stops. But neither of those systems can reason about what to do next when the unexpected happens — a new object type, a sensor disagreement, a workflow change mid-shift.

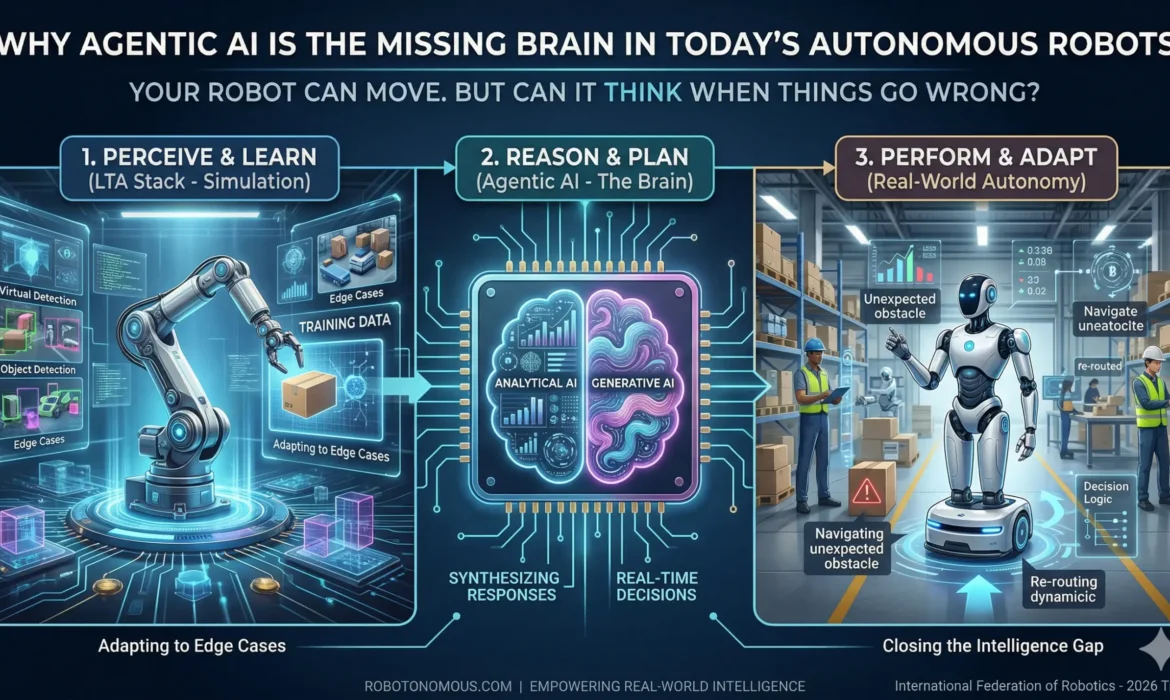

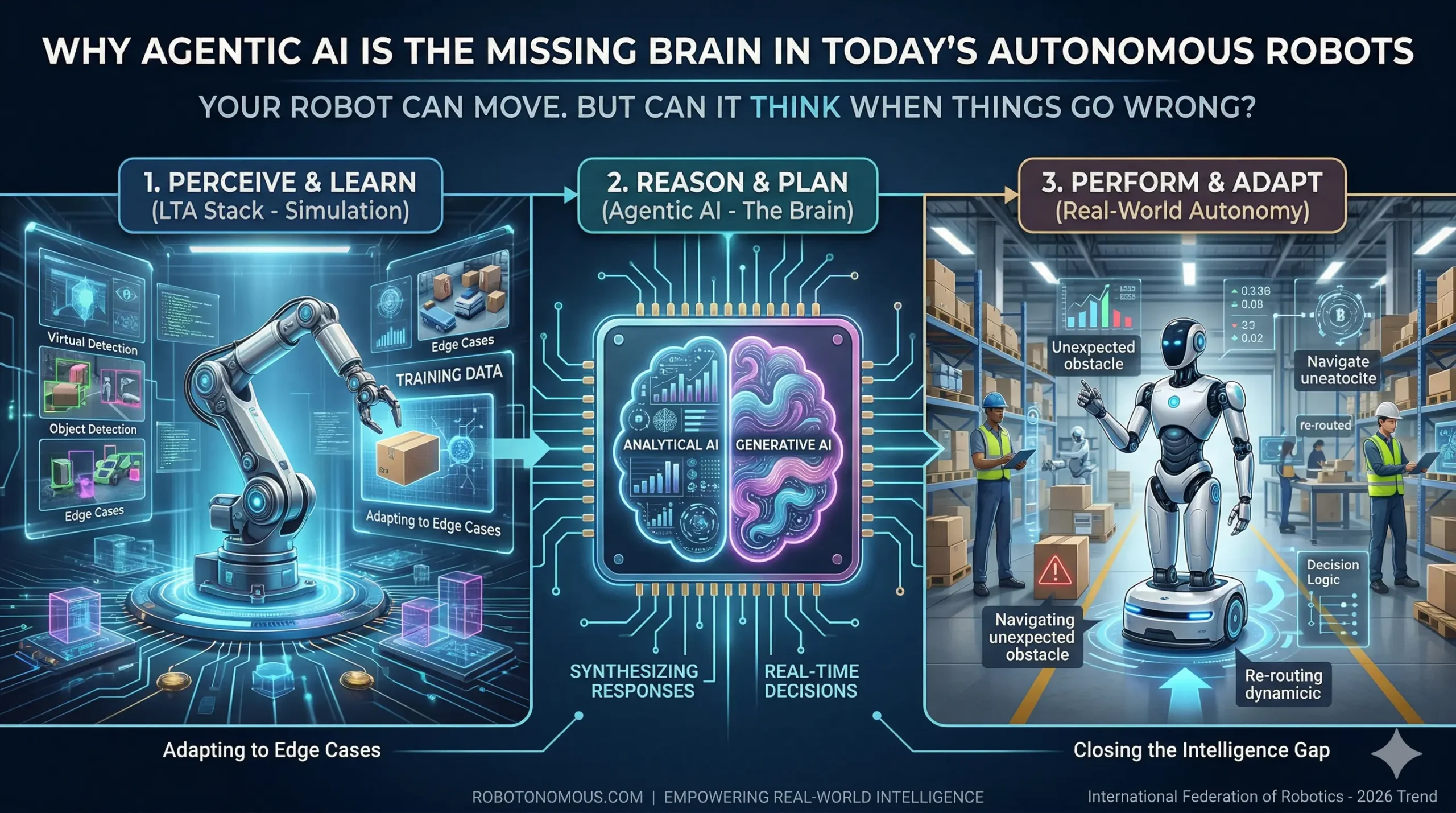

Agentic AI is fundamentally different. It combines analytical AI — which processes structured data, detects anomalies, and plans optimal paths — with generative AI, which enables the robot to synthesize new responses to situations it has never encountered before. The result is a system that does not just execute; it decides.

According to the International Federation of Robotics, agentic AI is one of the defining trends shaping the global robotics industry in 2026. Manufacturers are no longer asking whether to automate; they are asking whether their automation can think.

The real cost of brainless autonomy

When a traditional autonomous robot hits an edge case — an unfamiliar object, a sensor dropout, a changed environment — it does one of two things: it stops, or it fails. Either outcome costs money. In smart factory deployments, unplanned robot downtime can cost upwards of $5,000 per minute. In autonomous vehicle fleets, a system that cannot self-correct under sensor degradation is not a product — it is a liability.

This is the “missing brain” problem. Robots can perceive their environment. They can execute trained behaviors. What they cannot do — without agentic AI — is bridge the gap between what they see and what they should do when no rule covers the situation.

How LTA systems close the gap

At Robotonomous, we built the Learning, Training, and Autonomy (LTA) architecture specifically to close that gap. The LTA stack treats intelligence as a three-layer problem. The learning layer generates training data through high-fidelity simulation, exposing the robot to thousands of edge-case scenarios before deployment. The training layer builds the decision models that encode appropriate responses to those scenarios. The autonomy layer executes those decisions in real time: adapting, self-correcting, and escalating to a human supervisor only when genuinely necessary.

The result is a robot that does not just move. It reasons.
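To make the autonomy layer's "adapt, correct, escalate" behavior a little more concrete, here is a minimal Python sketch of a single decision step. It is an illustration under stated assumptions, not Robotonomous's implementation: the `Observation` fields, the lidar/camera sensor pair, the `decide` function, and every threshold value are hypothetical stand-ins for what the learning and training layers would supply in a real stack.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    SLOW_AND_REPLAN = "slow_and_replan"  # self-correct without a human
    ESCALATE = "escalate_to_human"       # hand off to a supervisor


@dataclass
class Observation:
    """Hypothetical snapshot of what the robot perceives ahead of it."""
    lidar_range_m: float      # obstacle distance reported by lidar
    camera_range_m: float     # obstacle distance estimated from the camera
    object_confidence: float  # classifier confidence in [0, 1]


# Hypothetical thresholds; in an LTA-style stack these would be tuned by the
# training layer against simulated edge cases rather than hard-coded.
MAX_SENSOR_DISAGREEMENT_M = 0.5
MIN_CONFIDENCE_TO_ACT_ALONE = 0.6
MIN_CONFIDENCE_TO_PROCEED = 0.9


def decide(obs: Observation) -> tuple[Action, float]:
    """Return an action and the obstacle range the planner should use."""
    disagreement = abs(obs.lidar_range_m - obs.camera_range_m)
    sensors_agree = disagreement <= MAX_SENSOR_DISAGREEMENT_M

    # Correct: average the readings when they agree; otherwise trust the
    # more conservative (closer) reading instead of stopping outright.
    if sensors_agree:
        range_m = (obs.lidar_range_m + obs.camera_range_m) / 2
    else:
        range_m = min(obs.lidar_range_m, obs.camera_range_m)

    # Escalate only when two problems stack up: conflicting sensors
    # and an object the model is unsure about.
    if not sensors_agree and obs.object_confidence < MIN_CONFIDENCE_TO_ACT_ALONE:
        return Action.ESCALATE, range_m

    # Adapt: any single anomaly triggers a slower, replanned approach.
    if not sensors_agree or obs.object_confidence < MIN_CONFIDENCE_TO_PROCEED:
        return Action.SLOW_AND_REPLAN, range_m

    return Action.PROCEED, range_m


if __name__ == "__main__":
    print(decide(Observation(10.0, 10.2, 0.95)))  # sensors agree, confident
    print(decide(Observation(10.0, 4.0, 0.95)))   # sensor disagreement only
    print(decide(Observation(10.0, 4.0, 0.3)))    # disagreement + uncertain object
```

The design choice worth noting is the middle tier: a single anomaly makes the robot slow down and replan on its own, and a human is pulled in only when anomalies compound. That reflects the idea that escalation should be the exception, not the default response to an edge case.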

The agentic shift is happening now

Humanoid robots are entering factory floors. Autonomous vehicle fleets are scaling beyond controlled test environments. Self-correcting warehouse systems are replacing fixed-path automated guided vehicles (AGVs). In every case, the organizations winning this transition are the ones that invested in the intelligence layer, not just the hardware.

Agentic AI is not a future capability. It is the present standard for any autonomous system expected to operate reliably in the real world.

The question is no longer whether your robot can move. The question is whether it can think — and keep thinking — when things go wrong.

Robotonomous builds full-stack LTA systems that give autonomous robots and vehicles the intelligence to perceive, plan, and perform in dynamic real-world environments. Explore our autonomy platform at robotonomous.com.